Agitated Clusters of Comforting Rage

The internet is making us hate each other. Why do we keep letting it?

In 1954, two sociologists descended on the communities of Craftown, New Jersey, and Hilltown, Pennsylvania.

The researchers, Paul Lazarsfeld and Robert Merton, had a list of questions. Amongst them: Could you tell me who your closest friends are? Do you think Black and white people should live together in housing projects? On the whole, do you think that Black and white residents in the village get along pretty well, or not so well?

The sociologists worked to map a social network of these villages. Did the residents’ friends live in the same community? Did they pray the same way? Did they share their political views with their friends?

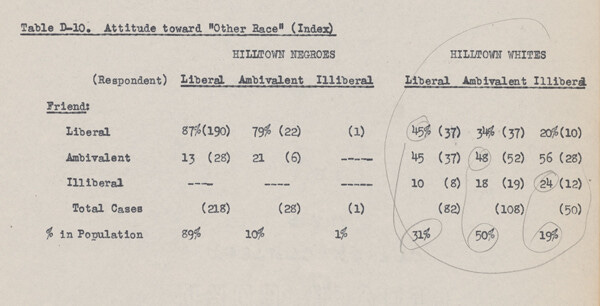

As they probed the political values of these residents, they separated them into three groups: Liberal, illiberal, and ambivalent. Liberals, broadly speaking, were pro-integration. Illiberals were pro-segregation. The ambivalent had indifferent or complicated views on the matter.

The sociologists had chosen Craftown and Hilltown[1] for very particular reasons: Craftown was uniformly white. Hilltown was multi-racial.[2]

In essence, the researchers had set out to study an old bit of wisdom: Do birds of a feather really flock together?

But, really, they accepted such an axiom as a foregone conclusion. They wanted to know why and how they flocked together. Did these people pick their friends based on ascribed statuses, such as race, gender, and age? Or on acquired statuses: Political inclination, class, values?

Their findings caused quite a stir. “The more cohesive community of Craftown,” the all-white village, “consistently exhibited a lower degree of selection of status-similars as friends.” That is, they didn’t seem to care much about race or age. Where they did discern, it related to traits “resulting from the individual’s own choice or achievement.”

In Hilltown, on the contrary, selectivity was much more marked in terms of ascribed status, since there was less by way of overarching community purposes to focus the attention of residents on locally achieved or acquired statuses.

In other words: Residents of the segregated community of Craftown were much more concerned with what their neighbors had achieved, what art they hung on their walls, and how they carried themselves.

Hilltown's residents, a far more diverse group, tended to choose their friends by two metrics: Innate characteristics, like race; and then values. Liberals over-selected other liberals as their friends; as did illiberals with other illiberals.

Shared values, the sociologists concluded, “make social interaction a rewarding experience, and the gratifying experience promotes the formation of common values.” Incompatible views, on the other hand, strained those relationships: “Some may deteriorate to the final point of dissolution.”

The study’s findings are disquieting. The data seemed to suggest that we are naturally inclined to join social fora full of people like us, and that segregation was implicitly the norm. They suggested that if we are among other people like us, we tend to be more comfortable exploring our differences. If we are in mixed company, however, we tend to hunker down with those who are like us, eschewing those who look or think differently.

The researchers coined a term for it: Homophily, the preference for the same.

This was a pretty influential piece of research: Google Scholar calculates that more than 4,000 other papers have cited Lazarsfeld and Merton’s findings. But it took on a whole new life at the dawn of the social internet. A 2001 paper, “Birds of a Feather: Homophily in Social Networks,” approvingly cited Lazarsfeld and Merton’s work but underscored how hard it can be to understand complex social systems without a significant amount of data, collected over a long period of time. Friendship and homophily, in essence, are more complicated than a short questionnaire can truly grasp. That paper has been cited more than 22,000 times in its own right.

A certain trillion-dollar company[3] was very taken with such an idea. In fact, they would make it the core of their business.

But wind the clock back to 1954, to a footnote in Lazarsfeld and Merton’s influential study, and you’ll discover a dark secret at the heart of homophily.

When it comes to their study of Hilltown, the supposedly polarized and homophilic integrated community, they write, “we must here confine our inquiry to white residents, since there are too few illiberal and ambivalent Negroes with friends in Hilltown to allow comparative analysis.” In other words: The Black residents were too decidedly anti-segregation for their views to matter, apparently.

What’s more, the proclamation that these white illiberals gravitated to other white illiberals was shaky, at best. Of the hundreds of white residents included in the study, the researchers found just 12 cases of illiberals befriending other illiberals.

The researchers promised a follow-up report to explore those issues, but it was never published. So the rot at the heart of this massive idea was left to fester.

This week, on a very special Bug-eyed and Shameless: The story of how some shoddy research on homophily created Facebook and nearly destroyed democracy.

And there’s still time for it to finish the job.

Today’s dispatch is a companion to Far and Widening: The Rise of Polarization in Canada, the report I’ve been working on since January with the Public Policy Forum.

If you’ve read Bug-eyed and Shameless recently, you’ll recognize some of the ideas and concepts: This newsletter has been a bit of an idea incubator as I was trying to piece this study together. Some subscribers even contributed to a very helpful chat on some of these topics. (Again: Thank you!)

While the report grapples with the specific Canadian instance of polarization, many of the trends and phenomena it describes are universal, at least among rich countries.

To kick off the launch of the report, I joined the CBC’s Front Burner podcast and The Andrew Lawton Show — two very different shows — to discuss different aspects of our current polarization problem.

I first heard about this hopelessly compromised Craftown-Hilltown study last March, in Ottawa, at the soft launch of Far and Widening. Wendy Hui Kyong Chun delivered a blistering review of homophily as freezing rain tapped on the windows of the third-floor ballroom at the National Arts Centre.

Chun, director of the Digital Democracies Institute at Simon Fraser University, was one of the eight academics tapped by the Public Policy Forum to contribute to our study of polarization. And her work has been rattling around in my head ever since.

“Homophily launders hate into love by treating racism as a ‘naturally’ occurring preference within ‘human ecology,’” Chun argues in an interview conducted for our project. “It transforms individuals into ‘neighbors’ who naturally want to live with people ‘like them’; it presumes that consensus stems from similarity; it makes segregation the default.”

There is, in short, something desperately wrong with homophily. The broad strokes may be true (you are, of course, more likely to befriend people of your age, of your class, with similar interests), but the ideas that building a community made up entirely of people like you is a natural human habit, and that value homophily exerts a powerful gravitational pull, are much shakier precepts.

But, as Chun explains, the invented notion of homophily has informed so much of our modern world. The very premise of the algorithmic internet is to serve us content that it thinks we want — based on what people who fit our demographics like, or hate. In so doing, the companies that make up this infrastructure tried to nudge us into artificial communities defined by that love and hate.

The process of polarization (and the transformation of mass media into new media) recalls the classic physics experiment in which a solid mass of inert iron filings is magnetized and pulled into clustered networks. The similarly charged filings gathered at either pole also repel each other, but they are stuck together by their overwhelming attraction to their opposite. Sustaining this magnetic charge in usually non-magnetic materials requires a previously charged magnet or a constant current. The “neighborhoods” discussed in these media are like these clusters of charged filings, in which similarly charged shards are both repulsed and welded together through their overwhelming attachment to their opposite.

Similarly, networking algorithms can create agitated clusters of comforting rage, in which people who angrily repulse each other are held together by their overwhelming attraction/hatred of something else. Intriguingly, homophily is usually justified in terms of comfort—you’re allegedly more comfortable when you’re with people “like you.”

Enter Facebook.

In 2012, Facebook was still in its growth phase. It hadn’t yet gone public. It had only recently implemented its algorithmically-determined news feed, at least as we understand it today. On the company blog, data scientist Eytan Bakshy published a rejection of the idea that the platform had created information bubbles.

Bakshy looked at how people interact with their strong and weak ties. “Homophily suggests that people who interact frequently are similar and may consume more of the same information,” Bakshy notes. Your Facebook circle is, in theory, a product of homophily: According to this supposed law of nature, you have befriended people who look, think, and act like you. Who you opt to interact with should further cement that principle.

But that’s not what he found at all.

If a Facebook user saw news content from a weak connection (someone they interact with just occasionally, like a co-worker, a third cousin, or someone you added by accident because they share a name with your sister-in-law but whom you’re now too embarrassed to unfriend), they were ten times as likely to share that article as they would be if it came from a complete stranger. They still shared content from their strong connections, but at a significantly lower rate.

“This may provide some comfort to those who worry that social networks are simply an echo chamber where people are only exposed to those who share the same opinions,” Bakshy wrote. It seemed like users were more intrigued by those outside their small circle than those in the inner sanctum.

That’s good. What Facebook did with that information was bad.

As the years went on, the company became obsessed with the idea of maximizing users’ time on the platform — in order to, of course, sell them the maximum possible number of ads.

In 2015, Bakshy and some colleagues published new findings in the journal Science. They had set out to specifically measure the value homophily of their platform.

The results, yet again, showed that users tended to defy the idea that they want to be ensconced in a cocoon of like-minded people. Liberals had more liberal friends, yes, and conservatives more conservative friends; but both maintained, on average, about 20% to 25% of friends of the opposite political affiliation.[4]

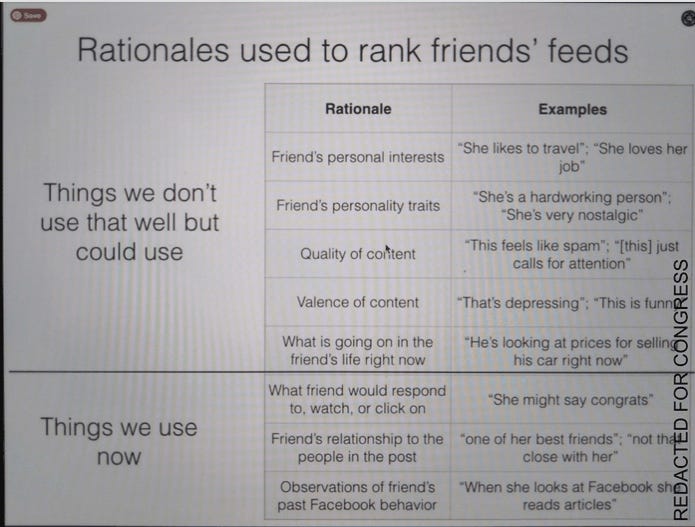

The real takeaway from this study was an early recognition that the Facebook algorithm was disproportionately serving users news content that, the algorithm believed, they wanted to see.

Liberals, for example, were 8% less likely to see the non-liberal news articles their friends had shared, whereas conservatives were 5% less likely. But Facebook discovered that users were more likely to click on content that comported with their worldview. Even if the gap was hardly decisive (users engaged with like-minded content in 87% of cases, and oppositional content 54% of the time), it was proof positive for Facebook that it could boost engagement by reducing how much ideologically inconsistent news and information it served.

There are myriad problems with this study. (See footnote.) But it continued a trend of Facebook staff boosting the idea that homophily is natural, even as their research showed it is not. Facebook saw this not as a problem to be fixed, but as a success to be replicated. They wrote blog posts explaining how their data showed cat people preferred cat people, and dog people preferred dog people. When their data revealed that sports fans generally don’t pick their friends based on team affiliation, the researchers sliced the data and declared that, because Saints and Seahawks partisans were tight with fellow members of Who Dat Nation and The 12s, it was likely homophily.

Internal Facebook documents, leaked by whistleblower Frances Haugen, reveal that the company actually began calculating an “audience homophily score.” A 2017 internal post notes that those with a high homophily score “have been found to be more likely to share misinformation and engage in destructive conflict tactics.” And yet the company kept pursuing homophily as a way to boost engagement.

In 2018, Mark Zuckerberg announced that Facebook had a new policy: Deepening homophily. “We built Facebook to help people stay connected and bring us closer together with the people that matter to us,” he wrote. “That's why we've always put friends and family at the core of the experience. Research shows that strengthening our relationships improves our well-being and happiness.” (Spoiler alert: It did not.)

Zuckerberg lamented how politics and news, particularly short-form video from those players, had distracted users from their meaningful social interactions. (Not mentioned is the fact that Facebook was the one counselling media outlets and political parties to produce a massive quantity of this video, and penalizing them if they did not.)

It’s a perfectly laudable goal to help people connect with their families. But the problem is that Facebook does not know who matters to you. It can only guess, based on a series of data points that — thus far — had proven deeply unreliable. And instead of letting its users choose their own community, Facebook set out to create a new Craftown.

At the same time, Zuckerberg’s platform continued rewarding high-emotion content — anger, in particular — in its ranking algorithm. So your news feed became like holding a family retreat at an Ikea furniture-building carnival.

The result, as you can likely predict, was a disaster. It made Facebook a meaner and nastier place. “Misinformation, toxicity, and violent content are inordinately prevalent among reshares,” an internal memo revealed of Zuckerberg’s algorithmic change, as published in the Wall Street Journal.

And, again, this is not what people wanted. When Facebook noticed a steep decline in user satisfaction with the news feed, it found that unhappy users reported a myriad of complaints. Two of the most common gripes? “I want to see the newest stories first” and “I see the same posts from the same people and pages.” They were telling Facebook loud and clear: Stop giving me the content you think I want to see, from the people you think I respect. Stop with the homophily.

Over the following year, the negative ramifications became even clearer.

“Engagement on positive and policy posts has been severely reduced, leaving [political] parties increasingly reliant on inflammatory posts and direct attacks on their competitors,” a 2019 internal report reveals. “Many parties, including those that have shifted strongly to the negative, worry about the long-term effects on democracy.”

Facebook spent most of the 2010s trying to imagine the narrow niches into which we fit, and pushing and pulling levers that would help turn those identities and values into community. But it was all imaginary. Facebook no more understood the things that connect us to our friends than Lazarsfeld and Merton understood what connected or repulsed the Black residents of Hilltown. But the sociologists were driven by curiosity. Facebook was driven by profit.

Last week, the journals Science and Nature published four peer-reviewed studies, written by researchers who had been given extraordinary access to Facebook’s internal data.

In lauding the publication of these studies, Meta published a sneering, insulting, disingenuous blog post that rejects the company’s own agency in breaking our brains.

“Does social media make us more polarized as a society or merely reflect divisions that already exist?” the post asks, rhetorically. “Does it help people become better informed about politics, or less? How does it affect people’s attitudes toward government and democracy?”

We know, from Facebook’s internal communiqués, the answers to those questions: Yes. Less. Negatively.

The studies reviewed the fractious nature of Facebook’s climate: A polarized, angry platform rife with misinformation and ideological segregation. But they all seemed to come to the same conclusion: It doesn’t really matter.

One study contrasting algorithmic and chronological feeds looked at the impact on users’ politics and found that the switch “did not cause detectable changes in downstream political attitudes, knowledge, or offline behavior.” Researchers’ attempt to reduce the amount of worldview-confirming content produced “a consistent pattern of precisely estimated results near zero.” An analysis of the impact of reshares was “not able to reliably detect shifts in users’ political attitudes or behaviors.”

Those findings should cause huge sighs of relief inside Meta headquarters. They can spin these results as vindication. They are anything but.

In those three studies, the authors offer the same possible explanation: That Facebook users are already so polarized that any changes to the platform are meaningless. Facebook drove us apart, into these agitated clusters of comforting rage, as much as it was able. Its building of homophily is complete.

This is not to argue that Facebook’s homophily-splattered algorithm is a unique evil in the world. Whilst it may have contributed to a genocide and undoubtedly helped elect Donald Trump, Meta is merely the embodiment of an internet designed to force us into small groups of furious actors. It is one player, operating on this faulty belief.

The afterlife of homophily, Chun and her research colleagues wrote in their seminal piece on the Craftown-Hilltown study in 2019, “has been remarkable, effectively reconstructing social worlds in its image.”

Homophily is not a state of nature but, as Chun calls it, a technique:

Many techniques to create polarization seek to undermine commonalities and structures that foster equality. Importantly, there’s a difference between homophily and community, even though the former is used to explain and undermine the latter. Homophily makes everything about individual choice and thus glosses over the effort and infrastructures to create community—and also sustain discrimination. What’s also dangerous about polarization and network neighborhoods as they exist now is that they are segregated. What is missing are these kinds of engagements or what Danielle Allen would call talking to strangers, which is the fundamental negotiation of democracy.

The grand irony is that the researchers at the heart of this grotesque afterlife warned us of it.

Merton, the sociologist, wrote how white residents of Hilltown were more likely to report racial animus in their new community if they went in expecting it. And if you move into a new neighborhood expecting to hate your neighbors, odds are that you won’t get along well with the family next door. At least not at first.

“The self-fulfilling prophecy is, in the beginning, a false definition of the situation evoking a new behavior which makes the originally false conception come true,” Merton wrote. “The specious validity of the self-fulfilling prophecy perpetuates a reign of error.”

But we also have the benefit of the 20th century to show us that integration was a magic bullet for close-minded politics. Shared housing projects, bussing, immigration, women in the workplace, the emergence of gay villages: These disruptive changes to our real world and actual communities may have prompted backlash initially, but they engendered understanding and complex new relationships. They broke homophily.

But Facebook, and its successors, engrossed themselves in that 1954 way of thinking. They fell victim to that reign of error, and made the faulty prophecy of Hilltown self-fulfilling. They operated on the idea that we would hate people who don’t look and think like us, so they inundated us with people who do — or, at least, who they think look and think like us.

We were told so many times that birds of a feather flock together that we accepted it as natural. Birds of a feather join the same Facebook group. Birds of a feather quit Twitter in unison. Birds of a feather join Gab. Birds of a feather change their profile pictures to black. Birds of a feather cyberbully the woman who posted something untoward. Or, perhaps most commonly, birds of a feather merely unplug because it’s exhausting.

But we know this is directly contrary to our very instincts, and it’s not how we live our real lives. Community and collective action are a part of the human experience, sure, but they can’t be the only part of being alive. No movement (political, social, or even a fandom) can be purely insular. So why do our online selves live in unreal, segregated villages?

This is not, by the way, some optimistic appeal to reach out and engage with people who disagree with you online. That is a good unto itself and may help, sure. But this is fundamentally a problem beyond our individual abilities. We can no more fix how communication happens online than a train passenger could improve working conditions for rail workers in 1889.

Someday we will look back and take stock of the damage done by this decade of corporate greed masquerading as community-building. Perhaps in the rich world that damage was largely limited to some tumultuous politics and a legacy of polarization, but there are countries where nascent democracies were damaged, even broken, by an insidious technology whose inventors neither understood nor tried to understand its powerful effects until well after the hurt was caused.

The most encouraging thing to emerge from the spate of recent research is that Facebook itself may not matter much anymore. And we, as a species, seem to be growing more literate and skeptical of the impact that social media has on our psyches and systems. And both the social media robber barons and the regulators seem to be getting wise to the dangerous impact of these technologies. There is reason for optimism that our future systems will give us more freedom to choose our communities, pick our friends, and exist in the conversations we want to be in. But it may take some time.

Yes, we’ve been dropped from a great height many times in a row. But people eventually learn to bounce.

That’s it for this special edition of Bug-eyed and Shameless.

I’m opening comments to everybody, in case you have thoughts, comments, feelings, recriminations, or funny jokes to be made about Far and Widening, or this post.

Until next time.

[1] The researchers gave pseudonyms to the towns. Craftown was Winfield Township, NJ; and Hilltown was Addison Terrace, in Pittsburgh.

[2] I’ve seen some conflicting accounts as to whether Addison Terrace was properly de-segregated, or merely multi-racial. So white and Black residents may not have occupied the same buildings, but they shared the community.

[3] It was $1 trillion until recently, anyway. It doesn’t really matter. Take it from a former VICE employee: Valuations of internet companies mean nothing.

[4] There is a fatal flaw in this study, worth noting: Facebook could only study those who listed their political affiliation in their profile. A paltry 9% of respondents did so. So this data is worthless for any actual study, but it is instructive to reveal how Facebook thought about these issues.
