We Lost the Battle Against Misinformation

And maybe that's okay.

I was sitting in my office on a quiet Saturday afternoon in January when my phone rang. I looked at the caller ID: “SCIENTOLOGY MISSIONS INTERNATIONAL.”

I sighed and answered the phone.

On the other end of the line was David Bloomberg, an executive in the secretive church and head of media relations for its sprawling religious-corporate empire. And I knew exactly why he was calling.

In that morning’s edition of the Toronto Star, I had a column decrying the Canadian government’s wrong-headed approach to fighting hate crimes. Ottawa had decided to emulate Germany and the United Kingdom by attempting to criminalize all manner of symbols associated with hate and terror, to adopt a whole new class of hate crimes, and to forbid any protest that may “provoke a state of fear in a person” enough to prevent their entry into a place of worship. It is bone-headed state overreach, I think.

“How would the law apply,” I wrote, “if a Jewish group protested outside a synagogue at which Israeli real-estate firms were marketing illegal West Bank settlements? Or if Muslims picketed an event at a mosque held by a marginal group known to glorify terrorism?”

In my first draft, the examples had ended there. But I am a slave to the rule of threes, and set my brain to thinking of a third case where such a law might be problematic. I came up with what I thought was a sterling way to complete the trio: “What about protestors demanding to know ‘Where is Shelly Miscavige?’ outside the Church of Scientology?”

I expected plenty of complaints from defenders and critics of Israel for the column. I should have known I was far more likely to hear from Scientology HQ. Sure enough, Bloomberg was not happy.

Miscavige, wife of the church’s high-profile leader David Miscavige, has not been properly seen in public in decades. Her virtual disappearance has become a meme with which to mock the church, and to highlight its cultish inclinations.

Bloomberg was calling to tell me that was all bullshit. The LAPD had checked out the claim, he said, and declared it unfounded. He told me that ex-Scientologist-turned-critic Leah Remini — who had filed a missing persons report for Miscavige after she had disappeared from public view for six years — was unstable and unreliable.

Repeating such a statement, Bloomberg told me, was dangerous and irresponsible. It opened up Scientologists to harassment and violence. It would be akin to uncritically quoting a dispatch from the Ku Klux Klan or amplifying the Protocols of the Elders of Zion.

I tried to stifle my astonished laughter. “Dave, come on,” I managed.

After the conversation went around a few times, Bloomberg mentioned he had consulted various thoughts I had posted online critical of Scientology over the years. I must have some kind of “animosity” towards the Church, he said.

With the conversation reaching 15 minutes, I told Bloomberg that his call was in vain: I would not change the text of my column. It was fair comment, it accurately described a protest movement which — right or wrong — shouldn’t be censored, and I happened to think that the question at issue was a valid one. “Shelly Miscavige hasn’t been seen in years,” I noted. (For more on that, I suggest reading Yashar Ali’s multi-part series on the topic.)

Bloomberg eventually followed up with me over email. “The statement in your article is demonstrably false and should be removed,” he wrote. “At a minimum, if you choose not to delete it entirely, you need to include a parenthetical noting that the LAPD officially deemed the report ‘unfounded.’ Leaving it as-is misleads readers and misrepresents the facts.”

After firing back a terse email — letting the Scientology exec know that I do not appreciate being told what I must do at a minimum — I chuckled to myself.

For years, I’ve covered the information beat. I’ve penned deep dives on the scourge of misinformation for major international papers, provided analysis on campaigns sophisticated and slapstick to delude us en masse, and delivered speeches to industry and government aimed at priming them on the state of our toxic information problem.

And now, Scientology says, I’m the problem.

Complaints from flack to journo are as old as the profession itself. But things have shifted in bizarre ways since “fake news” was declared word-of-the-year by Collins in 2017, followed by “misinformation” by dictionary.com the year after.

These words — and the concepts which have sprung up to both perpetuate and combat them — are emblematic of our excessive-information age. Everything is wrong and nothing can be trusted. All information is meant to mislead and deceive. Everyone has secret motives, and must be approached with hostility.

Plenty of smart people acknowledge this reality and yet remain awfully sure that, with the right combination of grit, technology, and institutional buy-in, we can put an end to the scourge of fake news, misinformation, disinformation, conspiracy theories — whatever you want to call it.

But this year’s pick from Merriam-Webster — “slop” — should be an indication that we have melted into a bubbling tar pit of nonsense, fakery, and bullshit. No amount of fact-checking, media literacy, or platform moderation can solve a problem of this extent. No volume of good journalism or education campaigns will unscrewup our toxic information ecosystem.

This week, on a very special Bug-eyed and Shameless, I’m here to wave the white flag. We’ve lost the battle against misinformation.

And maybe that’s okay.

It’s All Slop To Me Now

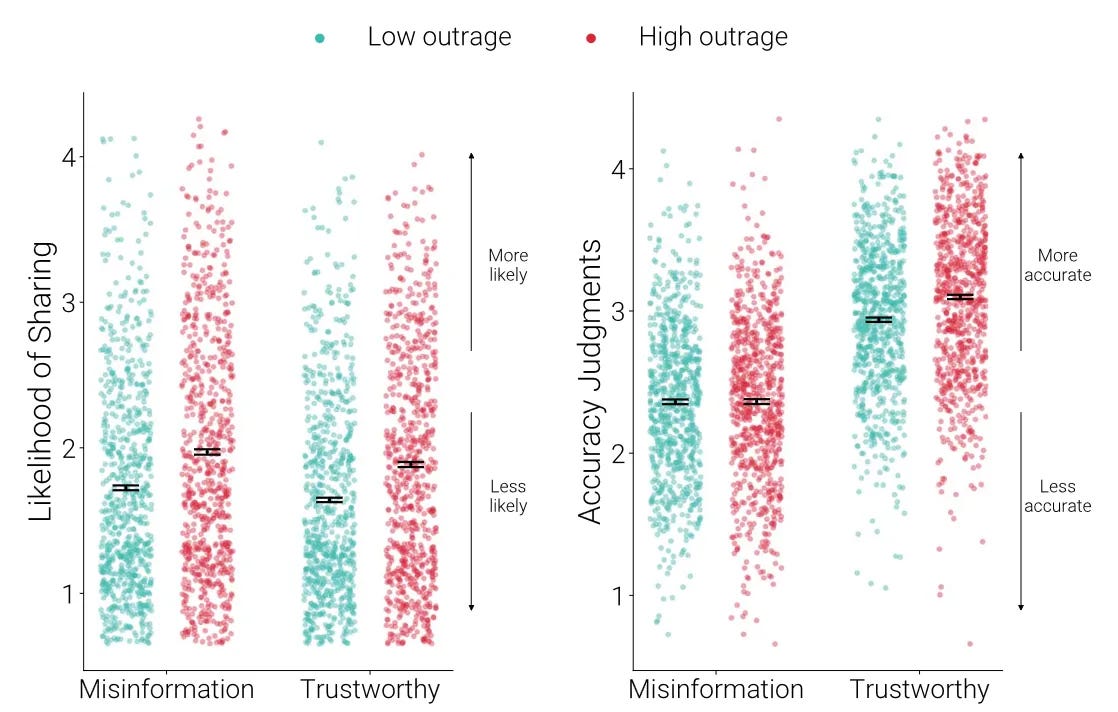

It’s 2024 and 730 American partisans are being presented with 20 headlines. Of those, 10 are legitimate news and 10 are bullshit. Half of those participants are being shown headlines designed to evoke rage, the other half are shown headlines with no particular emotional appeal. And they are each asked the same two questions:

“To the best of your knowledge, how accurate is the claim in the above headline?” and “how likely would you be to share this news article on your social media account?”[1]

This study, conducted by researchers at Princeton and Northwestern, should have shown something quite simple, if our popular understanding of misinformation is correct.

It should have shown a very tight relationship between a misjudgement in the accuracy of the article, the emotions it stirred up, and the propensity of one to share it.

But the researchers actually found that outrage had very little impact on how participants judged the accuracy of news articles, and that people proved quite good at separating fact from fiction. Study participants correctly reported “Marco Rubio Says That Felons Should Get Gun Votes” was misinformation and that “Trump: Jewish People Voting for Democrats Is ‘Great Disloyalty’” was a real news article. Simply as a test of whether people could sort misinformation from truth, even when the misinformation provokes a strong emotional reaction, the participants passed.

But, the researchers found, a worrying number of study participants went and shared this misinformation anyway. Particularly if it made them mad.

“We speculate that outrage-evoking misinformation may be less reputationally costly to share than other types of misinformation because of the signaling properties of outrage,” the researchers write. “If caught sharing misinformation, users can claim that they merely intended to express that the content is ‘outrageous if true’ preserving epistemic [accurate information] trust while bolstering their moral trust.”

These data suggest that people know they are sharing lies — but they do it anyway. Because the emotion it makes them feel upgrades this information to a truth beyond truth.

This one study adds to a mountain of other data points which clearly show that misinformation is not a sudden miasma that infects us as we go through the world. It is not a foreign-born illness which enters the country when we least expect it. Rather, misinformation is intrinsic to how we relate to information, and particularly how we deal with each other online.

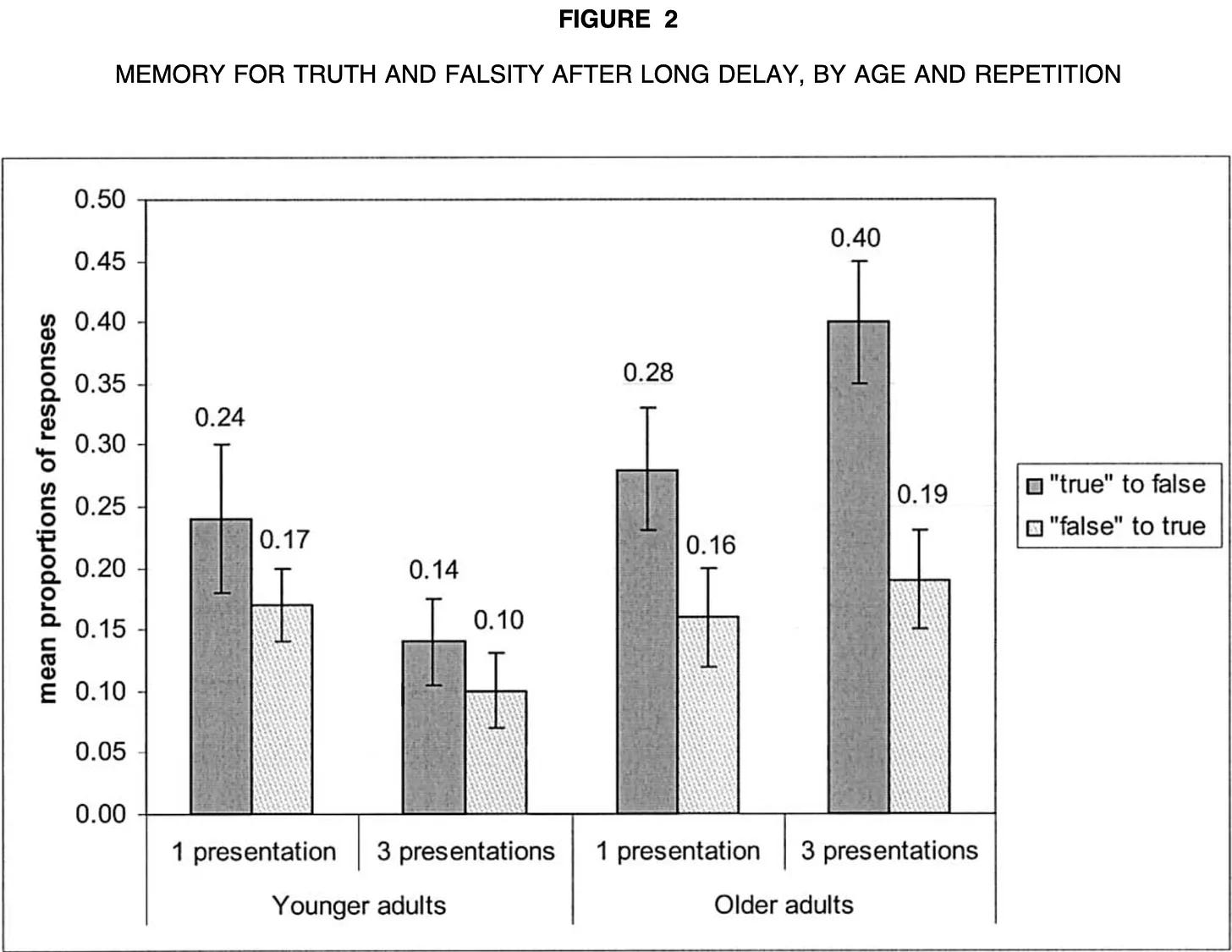

Let me hit you with one more study that illustrates our weird relationship with information. It was published in 2005, by a research team in Toronto. They had recruited a cohort of participants, half younger and half older, and presented them with a series of pretty arcane health statements. Things like “aspirin destroys tooth enamel,” “corn chips contain twice as much fat as potato chips,” and “shark fins can treat arthritis.”

The participants were asked to encode each of these statements as either “true” or “false.”

They did this a couple of times, and logged their answers each time. Then the researchers gave them the right answers. No, shark fins don’t treat arthritis. No, aspirin doesn’t destroy tooth enamel. So on. And they were asked to rank the statements again.

Then they were invited back three days later to assess the statements again. At this point, they had heard these truths and fictions again and again and again and they knew what was right and what was wrong.

What happened? The study participants began consistently mischaracterizing false statements as true, and vice versa.[2]

The experiment had revealed an “extremely undesirable, and previously unidentified, side effect of warnings: the more often older adults were told that a claim was false, the more likely they were to remember it erroneously as true after a 3 day delay,” they wrote. (While the researchers fixated on older adults, the data themselves show that this is a cross-generational issue.)

People walked into that study pretty sure that shark fins didn’t treat arthritis. They were told repeatedly that shark fins do not treat arthritis. And they walked out of the study believing that shark fins treat arthritis.

There are plenty of other data which support this idea, but put simply: We believe things if we hear them enough, and we are more likely to act on bad information if it stirs the right emotions in us.

These things are, seemingly, innate to the human psyche. But neither was a significant problem because we had systems of information that continued to reinforce what was true and what was false. And because we had a relatively limited amount of information we needed to know.

If you believed shark fins treat arthritis, your doctor could set you straight. If you suspected that something was fishy about 9/11, there was voluminous reporting detailing what actually happened. Most any bit of misinformation you may glom on to was likely some combination of marginal, harmless, or easily corrected. Even if you were inclined to share misinformation, you would be limited by how many people you were physically capable of sharing it with.

Misinformation, in short, had very little viral potentiality. And we had systems meant to keep it that way.

That’s not the world we live in today. With institutions supplanted by the internet, we live in a time of hyperinformation, delivered without intermediary. It is simply impossible to separate truth from lie in realtime. We are exposed to so much information day-in and day-out that we are required to utilize shortcuts to determine what we care about, what we engage with, and what we believe.

Some people continue to trust only what comes from official and reputable sources, disregarding all the rest. Some pick a handful of useful intermediaries, analysts, or influencers and ape their information diet. Others radically distrust everything, letting their gut and emotions decide what is true and what is not.

While there was once merit to all these approaches, none of them is good enough any longer.

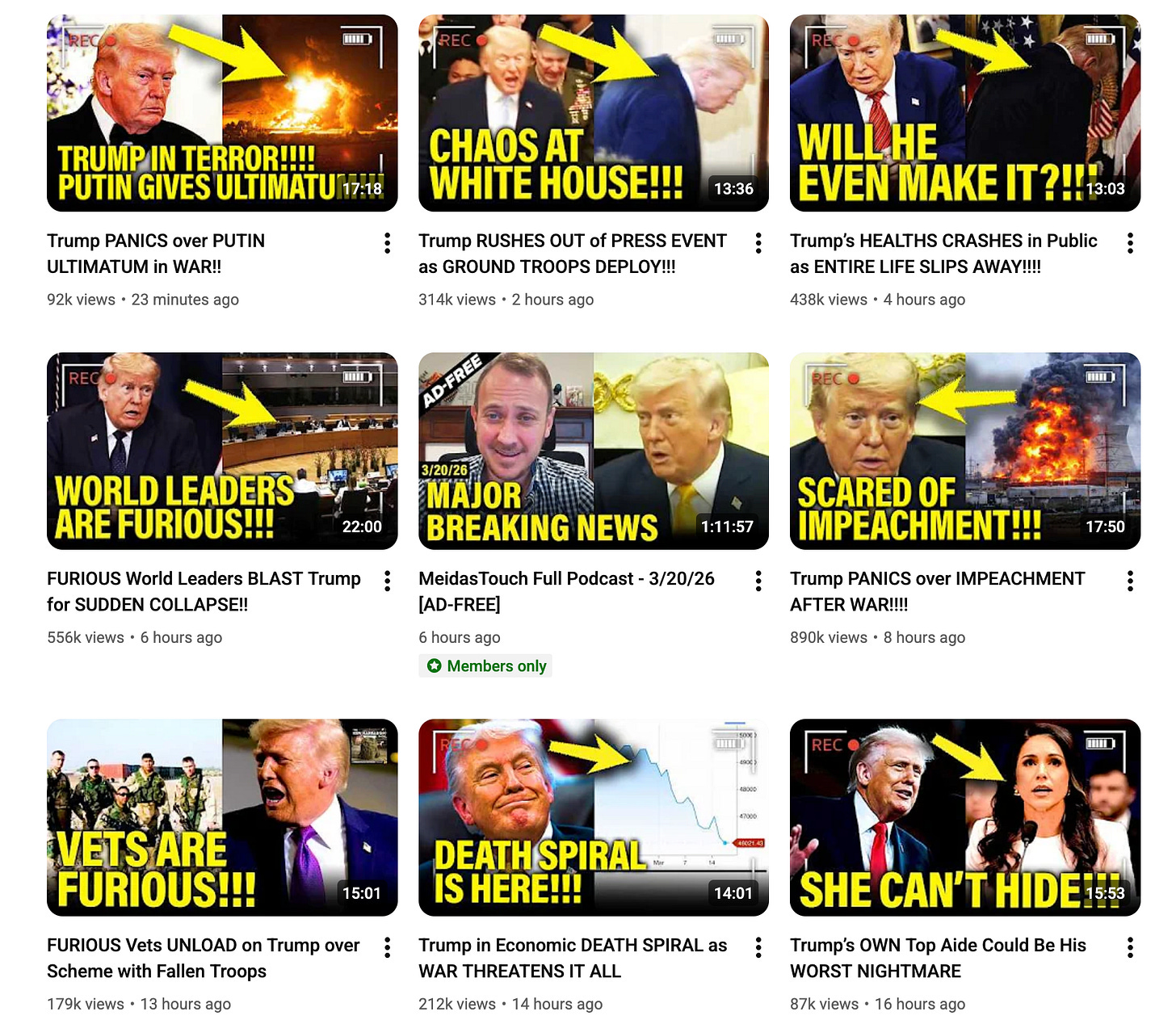

The mainstream press in most Western countries has been systematically de-funded throughout the 21st century and, worse, they (we) have mimicked the tactics of the online firehose of information to try and compete for readers’ and viewers’ 24/7 attention. News is now, increasingly, a perpetual BREAKING NEWS chyron, rolling liveblogs of events big and small, and articles designed to maximize engagement.

The influencer economy, which exists ostensibly to make sense of the deluge of information and news being produced, has grown saturated, requiring participants to increase output and ratchet up emotion. There is a constant race to get you to open every email, watch every video, share every TikTok, listen to every podcast. The influencers are not simplifying an overheated information economy; they are merely adding to its dizzying volume.

Social media begat these changes but is a major contributor in its own right. Facebook, Instagram, TikTok, and YouTube all, in their own way, encourage rage, slop, lies, and addiction. Their goal is to encourage all content which makes users feel that their engagement is necessary and important. The oligarch-owned tech platforms want to bombard users with content about the chaotic state of the world not because they want to inform, but because they want to make users angry. Anger, we know, increases engagement. And, particularly as surveillance helps these platforms perfect their algorithms in an era of ubiquitous short-form video, they are getting very good at this.

The Trump administration, meanwhile, has blessed Big Tech’s mass-prescribing of opiates to the masses while bringing influencers closer and pushing journalism further away. The White House’s Twitter account and a band of conspiracists in the press pool have become far more important for the administration than the New York Times, and the public has largely gone along with this change. Trump’s oligarch friends have managed to dismantle institutions like CBS and the Washington Post from within — not even bothering to turn them into a sycophantic press. That role is reserved for the predictable slavish obedience of Fox News and for the aforementioned army of digital soldiers, each feeding the high-velocity content machines.

I say we lost the battle against misinformation because there is no civil society or regulatory remedy to undo this, particularly not when the primary regulatory jurisdiction, the United States, is led by a man who is uniquely responsible for worsening this problem and benefiting from it.

This is on full display in the war on Iran.

There’s the White House mixing old baseball clips with footage of airstrikes in Iran. There’s the Iranian regime as cartoon bowling pins. There’s a war hype video borrowing cutscenes from Call of Duty. There’s a deluge of AI-generated videos of missile strikes and terror, created by a plethora of different actors with varied motivations.

“Polls show that a lot of young people are actually somewhat supportive of this war and our goal is to deliver content to them,” a White House official told Politico this week. (But when the New York Times posts an image from Iran, it gets bombarded with accusations of digital manipulation.)

This is perhaps the most obscene example yet of our jittering in-and-out of reality. The administration is relying on memes and slop to sell a war, yet there are still serious people telling us that this is a problem of our moral failing, or of journalists who just can’t move fast enough.

“Despite daily debunking efforts from people like [BBC Verify senior journalist Shayan] Sardarizadeh, new fakes are popping up far faster than they can be swatted down,” lamented CNN’s Daniel Dale earlier in March.

Dale continues: “For those of us who can’t avoid frequent scrolling, it’s wise to take a beat and do even a few seconds of online searching before believing or sharing a sensational wartime video or image.”

With all due respect to my colleague: Fuck that.

If you turn to Google to check a particular bit of misinformation, you are going to be greeted with the company’s AI model: One that is absolutely prone to repeating, even creating, misinformation. We learned this week that Google is using AI to actively rewrite news results. It, like its social media brethren, is more keen on keeping users in its ecosystem than on delivering them reliable news content.

Even if Google were a reliable avenue to fact-check the onslaught of information we are told to verify, it would require us to spend half our days kicking the tires on each video, image, and claim. And in trying to do so, we run into the same original problem: Who can you trust online these days?

Individuals cannot overcome the mounting pile of misinformation, slop, and lies. And we can’t hope that our governments will fix this for us.

The evidence tells us that even if we could get our media to debunk every single fake image, and get a few platforms to label every AI-generated airstrike video, flag every deepfake, warn users of each false narrative — it would not truly matter. People would share it anyway and bad actors would continue producing slop for likes. For some, the debunks would become evidence that the information strikes at some emotional truth. For many, they would internalize that fact-check as just another piece of information to be sorted, categorized, and promptly forgotten.

I have written a lot on this newsletter about how the internet makes us mad (Dispatches #64, #110, #144), how it lies to us (Dispatches #90, #141), how the oligarchs who run it want it that way (Dispatches #47, #80), and how our attempts to fight back against its negative externalities have been limp and wrong-headed (Dispatches #68, #85, #102, #104).

I have lost whatever optimism I once had that we could do battle with the forces of misinformation and win.

And frankly? It’s liberating.

Because it really simplifies what I think we need to do next.

“The Sisyphean Cycle of Technology Panics,” Where We Finally Get the Boulder Atop the Hill

If I may get metaphysical for a moment.

We live in an era where we are fed gigabytes of information each minute — and where we can access petabytes more whenever we want. Driven by curiosity, boredom, anxiety, obligation, anger, and any other combination of emotional states, we read, watch, listen, and engage with more information in a day than past generations consumed in a week, if not a month, if not their whole lifetime.

And with that, information becomes more abstract. The matter loses its relationship with the form of the information. Wars, plane crashes, cat pics, cellphone videos of a violent assault, fail videos, memes — they become digital objects unto themselves, existing apart from the real-world things they describe. And with that, it becomes easier to engage with those digital information objects based on the emotion they provoke without bothering to engage with the real things at their origin.

I’ve explained, in the previous section, how this system is being weaponized and expanded. But the simple fact is that we were always heading in this direction. While we could have made a lot of choices, early on, to prevent the worst version of this reality, it seems inevitable that the internet would abstract our relationship with reality, making our emotional connection with these totems of information more important than the real things they describe.

Artificial Intelligence widens this gap in bizarre new ways. We long ago closed the uncanny valley, allowing us to replicate real life with computers, but now we’re automating the creation of the unreal. Whereas life-like animation and deepfakes used to take a fair bit of doing, anyone anywhere can now spit out images, video, audio, and music that emulate the real thing. It may be full of weird artifacts and lack soul, but this technology has simultaneously multiplied the amount of information being generated every second while widening the gap between real and not. And in so doing, it makes all information less real. Existing online now requires you to look at every photo, watch every video, listen to every song and think: Is this real life?

What’s more, decentralizing the creation of this information to any and everyone means it can be created, freely or cheaply, for any purpose. It can be generated to entertain, to deceive, to rationalize a psychotic break, to obscure a crime, to gin up anger, or with the express purpose of making others lose their mind.

In the past, new technology which abstracts the real world has been met with skepticism. And, in hindsight, those worries look silly.

The Western world once criminalized ‘crime comics’ out of fear that fictionalized noir would push youth to a life of crime. Parenting magazines once lamented how the disembodied voice of radio “comes into our very homes and captures our children before our very eyes.” Movies, television, video games: They all prompted waves of paranoia that they put humans into the land of the unreal, making them vulnerable to all sorts of psychosis and corruption.

Psychologist Amy Orben dubbed this very human habit “the Sisyphean cycle of technology panics.” She correctly notes that humanity has gone through this reactionary process many times before — with the public and media fixating on real and perceived problems, followed by governments flailing about in trying to internalize those externalities. Orben makes a strong case for us to get better at collecting actual evidence about the harms of new technology, but I think we can safely say that many of these past panics proved overblown.

Yet, and I know all panickers say this, we have reason to believe this time is different.

Not only have we plugged ourselves into far more information than our species has ever had to process, not only have we uploaded much of our politics and media to this new system, and not only have we allowed a small number of oligarchs to take control of this system — we left it vulnerable to exploitation. The owners of every major social media company and most major AI firms have made a clear alliance with the Trump White House. The U.S. government has broken free from all its confines, and nobody seems willing or able to stop it.

This is already having global ramifications. The United Kingdom was racked, in 2024, by race riots egged on by Elon Musk, fed with a pipeline of anti-migrant misinformation, and promoted via Twitter. Rather than reckon with the destructive nature of those riots, the UK looks excited to elect one of its chief proponents.

Much of Europe, France and Germany in particular, looks set to enter the embrace of an uber-online far-right, each promising to bring order to the chaos — the chaos they play up on their Twitter feeds and in their slick YouTube documentaries.

Australia has long been resilient against this slopulism. But recent polls show Pauline Hanson, leader of the far-right One Nation party, surging in popularity. Hanson asks voters to reject the complexity of this hyperinformation system by relying on “common sense,” even as she markets wildly complex theories — including how the government staged a mass shooting attack to steal everyone’s guns.

The rise of these charlatan politicians isn’t just a byproduct of bad information, it is the result of too much information. People are overwhelmed, anxious, and angry. They are opting for simplicity. (And anger.)

To that end, there has been a new quest to simplify information. Maybe we can have a singular source of truth, the thinking goes. And maybe that can be AI.

And yet again I say: Fuck that.

If we opt to turn ChatGPT into our new gatekeeper, an automated voice-from-on-high who can separate the wheat from the chaff and tell us which way is up, we deserve what we get.

LLMs and chatbots are both inherently reliant on journalism to function: They are leeches. They take objective reporting — which may not be truth but at least tries to be true — and mix it with social media chatter, low-quality tripe, and actual misinformation. The result is slop, of varying degrees. Worse yet, they are owned and operated by firms that have already shown a willingness to manipulate their responses to maximize engagement and advance ideological objectives.

Put simply: ChatGPT is primed to drive us mad, just as Facebook did. Probably more so.

I saw a billboard recently, for Bell Canada’s new sovereign AI somethingorother. It said something to the effect of: “Imagine if every fact were already checked.”

I don’t have to imagine, I remember it well. It was a thing called the newspaper.

There is a clear off-ramp to our informational hell: Consume less information.

The wonders of the morning newspaper and the evening newscast were that they asked only an hour of your time. You had no obligation to check the newspaper every five minutes to see what had changed. There was no moral imperative to stay glued to your screen to hardwire into new developments.

We are not smarter or better off because we are processing a constant stream of news, geopolitics, health information, celebrity gossip, social media bickering, stock tips, Polymarket bets, cultural clashing, and so on.

Yes, the internet has decentralized the conversation and given all of us the responsibility of establishing a shared sense of truth. Are we happier because of it?

I say no. I think if any of us were given the power to return newspapers, academics, doctors, and experts to their central role as arbiters of truth, we’d do it in an instant.

But there’s no way to do that, unless we do a bit of information devolution. We need media outlets, politicians, organizations, and institutions who are more and more willing to engage with people offline — or, at least, far away from the toxic information systems on which they remain.

We need to go on an informational diet. We need to become more discerning with what we want to know, and what we need to know.

Because there is still a war to fight. And the only way to win the next battle, and the one after that, is if we stop fighting on the unreal fields of the oligarch-and-despot-dominated internet.

That’s it for this dispatch, which I’d been trying — and failing — to write for some months. Some readers may find it a bit repetitious, given past dispatches, but you’re witnessing my Luddite radicalization in realtime, so be patient.

For those interested in reading my columns over in the Star, here are some gift links: To my recent chat with NDP leadership candidate Avi Lewis; a dissection of one big Trumpian lie on the war in Iran; and a screed against AI.

If you haven’t already, please subscribe to Soft Power. I’ve got a new dispatch coming very shortly.

Meanwhile, I’m trying to get back on the horse and make sure there are steady and regular Bug-eyed and Shamelesses in your inbox. But also, in keeping with today’s dispatch, not too many and not too much.

Until next time!

[1] Misinformation exploits outrage to spread online, Killian McLoughlin et al. (Science, 2024)

[2] How Warnings about False Claims Become Recommendations, Ian Skurnik et al. (Journal of Consumer Research, 2005)

Good stuff. The amount of crap makes it hard to find reliable info. To some degree, I think that was true with newspapers and television news as well. The key was to pick suppliers who you had reason to trust. So, I'm not sure that the trust issue has really changed, but as you point out, what has changed is the amount of information we are bombarded with. And the amount of AI slop makes it even harder. Keep fighting the good fight, I will keep reading.

Here are my suggestions for retaining sanity despite the chaos and manipulation Justin describes so eloquently:

1. Stop scrolling. Abstain from social media altogether, except to keep in touch with distant family members or friends.

2. Say goodbye to Google Search and use Kagi instead (https://kagi.com/). Try it out for 100 searches and then gladly pay US$6/month to search without ads, tracking, or noise — and with AI results totally optional.

3. Limit news intake to a few trusted sources. Avoid speculative opinion.